Last week we introduced multi-start optimization and walked through a simple, effective ILIAD workflow that uses it to find the global maximum of a surface. This week we will use ILIAD’s toolkit to look deeper into the strengths and core principles of this technique and compare it with other prevalent methods for nonlinear optimization problems.
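As a quick refresher, the core idea of multi-start optimization can be sketched in a few lines of plain Python. This is only an illustrative sketch and not the ILIAD workflow: the test surface, the grid of start points, the fixed-step gradient ascent, and the names `surface`, `hill_climb`, and `multi_start` are all assumptions made for this example.

```python
import math
from itertools import product


def surface(x, y):
    """Hypothetical test surface: a global peak near (1, 1) plus two lower local peaks."""
    return (3.0 * math.exp(-((x - 1) ** 2 + (y - 1) ** 2))
            + 2.0 * math.exp(-((x + 2) ** 2 + (y + 1) ** 2))
            + 1.5 * math.exp(-((x - 2) ** 2 + (y + 2) ** 2)))


def hill_climb(f, x, y, step=0.1, iters=300, h=1e-5):
    """Local search: fixed-step gradient ascent with central-difference gradients."""
    for _ in range(iters):
        gx = (f(x + h, y) - f(x - h, y)) / (2 * h)
        gy = (f(x, y + h) - f(x, y - h)) / (2 * h)
        x, y = x + step * gx, y + step * gy
    return x, y, f(x, y)


def multi_start(f, starts):
    """Run the local search from every start point and keep the best result."""
    results = [hill_climb(f, x0, y0) for x0, y0 in starts]
    best = max(results, key=lambda r: r[2])
    return best, results


# Assumed start points: a coarse 5 x 5 grid over [-4, 4] x [-4, 4].
starts = list(product([-4, -2, 0, 2, 4], repeat=2))
best, results = multi_start(surface, starts)
print("best point:", best[:2], "best value:", best[2])
```

Each local search can only climb the hill it starts on, so some runs end on the lower peaks or stall on the flat outskirts. Keeping the best result across all starts is what recovers the global maximum, and the full set of visited points is the kind of data that can later be used to approximate the design space.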

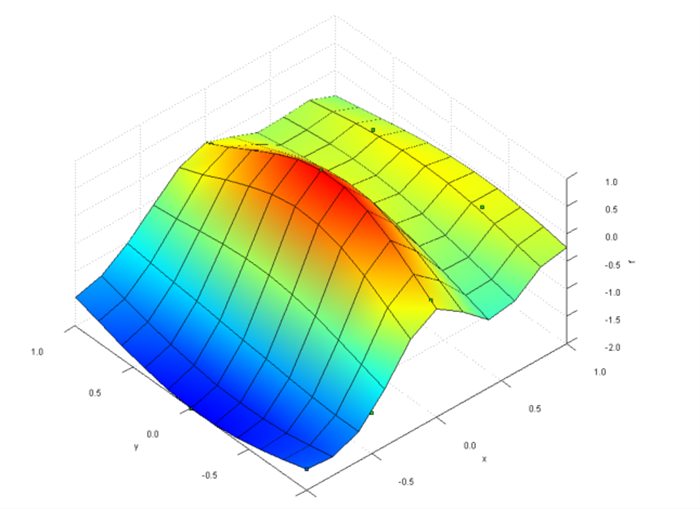

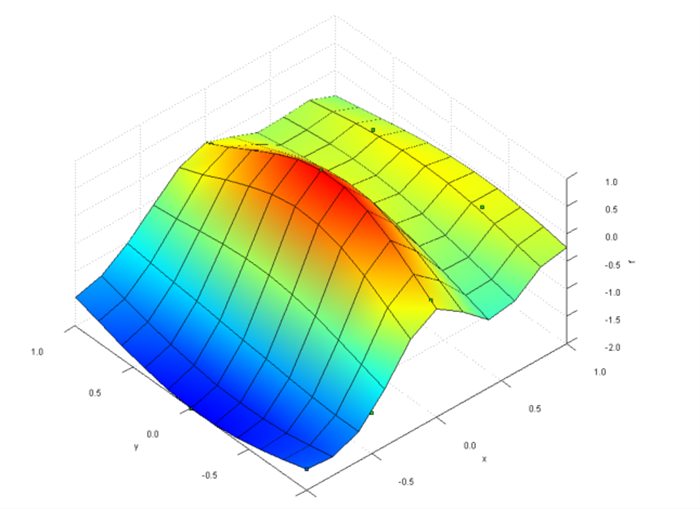

Figure 1: Example surface with a single global maximum and several local extrema.

Figure 2: Approximated contour plot showing all design points from the multi-start optimization.

Figure 3: Approximation of the design space reconstructed from multi-start optimization data.

Connect with us now for complimentary webinars and evaluation software.

Our engineering team can work with you to conduct a Test Case showing how OmniQuest™ will improve your designs, processes, and your overall business.